Versions of object detection are all around us. It causes a small amount of outrage on social media when people discover it. You’ve come across it when Facebook or Google managed to locate you in pictures where you weren’t “tagged”.

Facebook draws a box around your face and asks, “Is this you?”. Google does that in albums shared as well. That’s object detection. The big tech companies have managed to locate an object–you–and identify that object. Facebook went a little deep into how they do it, worth a read if you’re so inclined.

Let’s first define what is object detection? It is the result of a series of techniques that recognize and classify objects in images.

It is a model that is capable of identifying places, people, objects and many other types of elements within an image, and drawing conclusions from them by analyzing them.

Let’s describe this in a slightly simplified way.

What does the internet love? Cats. There is a website which identifies cats. It is simply called: Is this a cat? You upload an image and it identifies if what you uploaded is a cat.

The reason behind this is, well, the internet loves cats. But also, somewhere in the background is a model, which is trying to collect all the different images of cats and is learning to identify cats from, well, not cats. It is creating classes and deciding if the image uploaded is a cat or not.

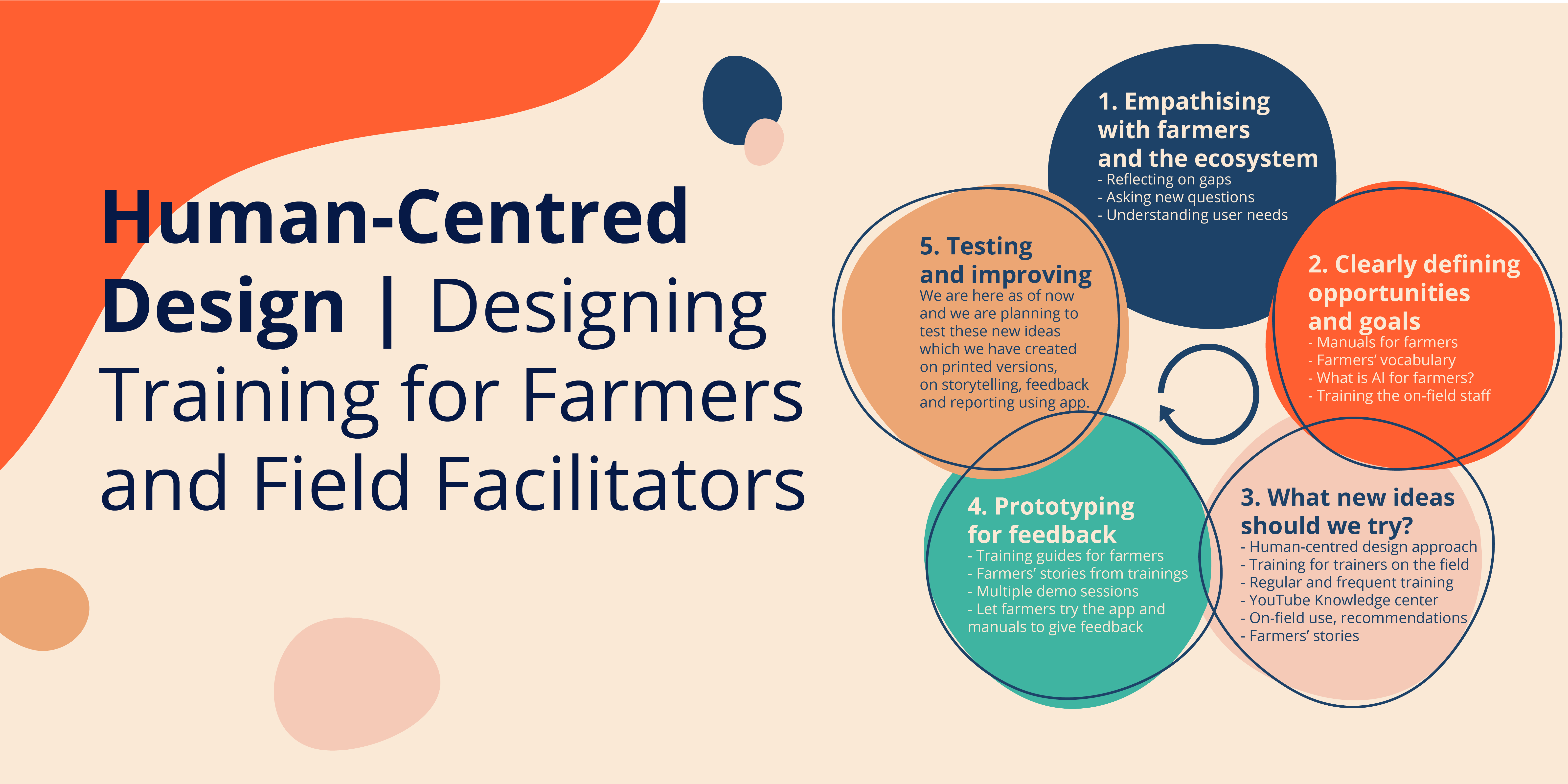

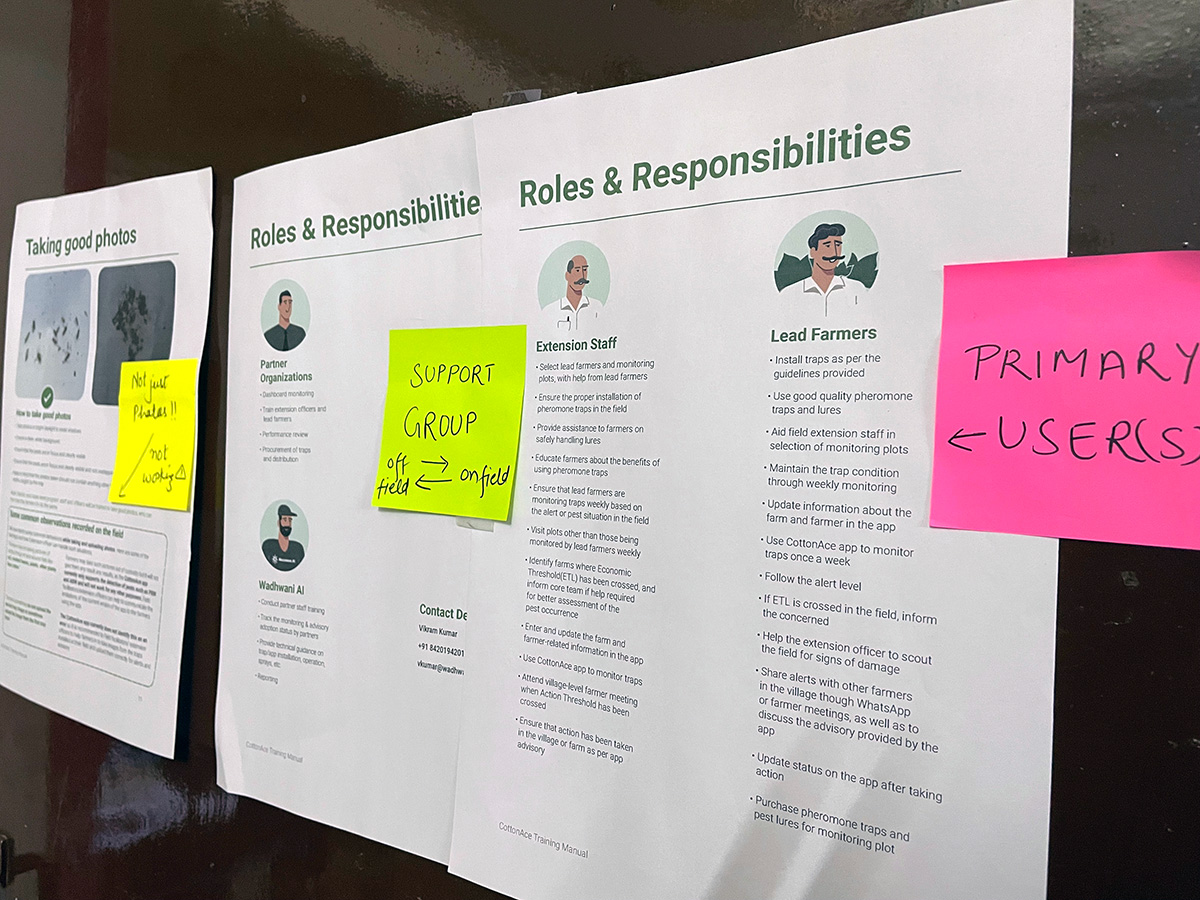

At Wadhwani Institute for Artificial Intelligence, we use object detection differently – To recognize two types of pests, the pink bollworm and the American bollworm. Both these pests can ruin cotton crops across the world. Recently, a news report stated that Pink Bollworm can affect 15% of a farm’s yield.

How does it work?

What can really help the farmer is to know if she needs to take action. That is dictated by the number of pests on the trap. If that number crosses the safety threshold, the farmer needs to take action to stop infestation and prevent her cotton crop from being ruined.

So what happens behind the scenes? The task of the model is to locate the pests, draw boxes and identify them. The aspect ratios of these boxes are not very different. So a default set of boxes with the most common aspect ratios is formed. Most of the boxes around the objects don’t have a lot of different aspect ratios. So, a set of k default boxes (let’s call the set A) will encompass almost all the boxes. A Convolutional Neural Network(CNN) takes in images (NxN) and runs convolutions on top of them. After one set of convolutions the size of the output is reduced.

As the size keeps reducing, the model is outputting higher-level abstract features.

So, we select levels from the multiple convolution sets outputs, this gives us information about different-sized objects from different levels. One of these levels’ output is, let’s say an SxS grid. For every cell, the model tries all default boxes from A and indicates how confident it is for every class [pink bollworm, american bollworm, no object]. So, for every cell you get k default boxes, and for every box you get 3 confidence scores (number of object classes and an extra one for no object) and 4 offset values to exactly locate the box on the grid.

After this, the model refines the box predictions (removing redundant boxes, low confidence boxes, etc.), and returns tight boxes drawn around the objects, in our case, pests.

The farmers now know if there has been a pest attack. The advisory suggests how much and what pesticide can be sprayed to prevent further damage.