For a product manager, launching a new version of an app should not be hard, and updating the UX/UI is exciting. But we operate in a different world, where everything we do needs to be measured. We have to ask, “Is this too much change? Will it alienate our users?” My colleagues explored this in a previous post, which is worth checking out. This is the question we asked during our Kharif pilot. Before that, we ran a summer pilot in Hubli, which had some very interesting results. We have asked an auditor and a university to validate those results, and we will announce them once we hear back.

But for now, let’s talk about the Kharif pilot and what was different from the summer pilot. We expanded out of Hubli into four other districts across the country. Currently, our pilot is running in Wardha in Maharashtra, Kutch in Gujarat, and Ranga Reddy and Adilabad in Telangana. In summer, some of these farmers had access to irrigation; this time, the majority don’t. The type of farmers, the locations and even the soil are different. Last time around, we worked with around 120 farmers. This time we have 700 lead farmers and 17,000 cascade farmers using our app. Cascade farmers are those who get their information from lead farmers. We have partnered with Welspun Foundation and the Deshpande Foundation for our pilot.

A few updates

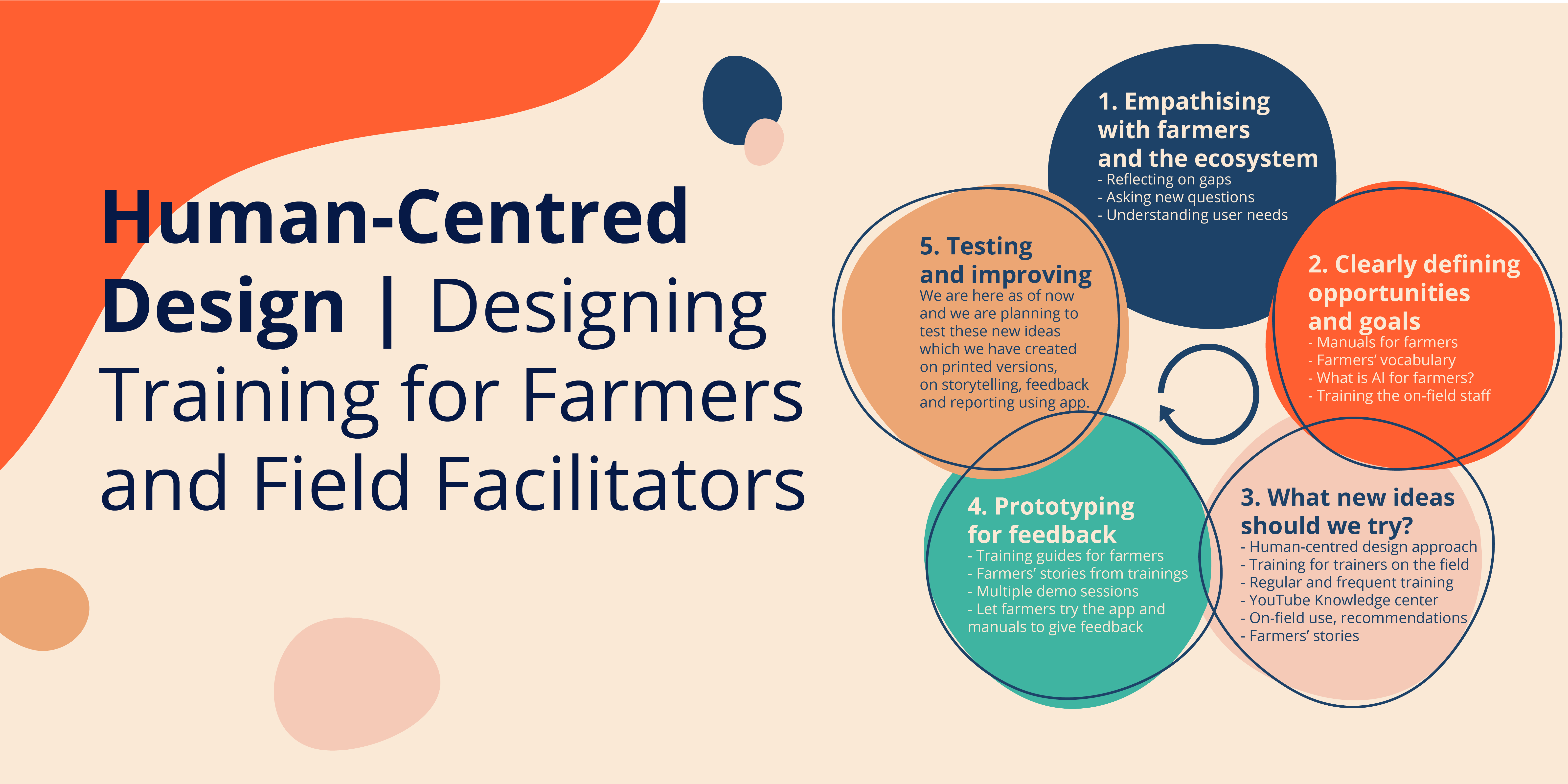

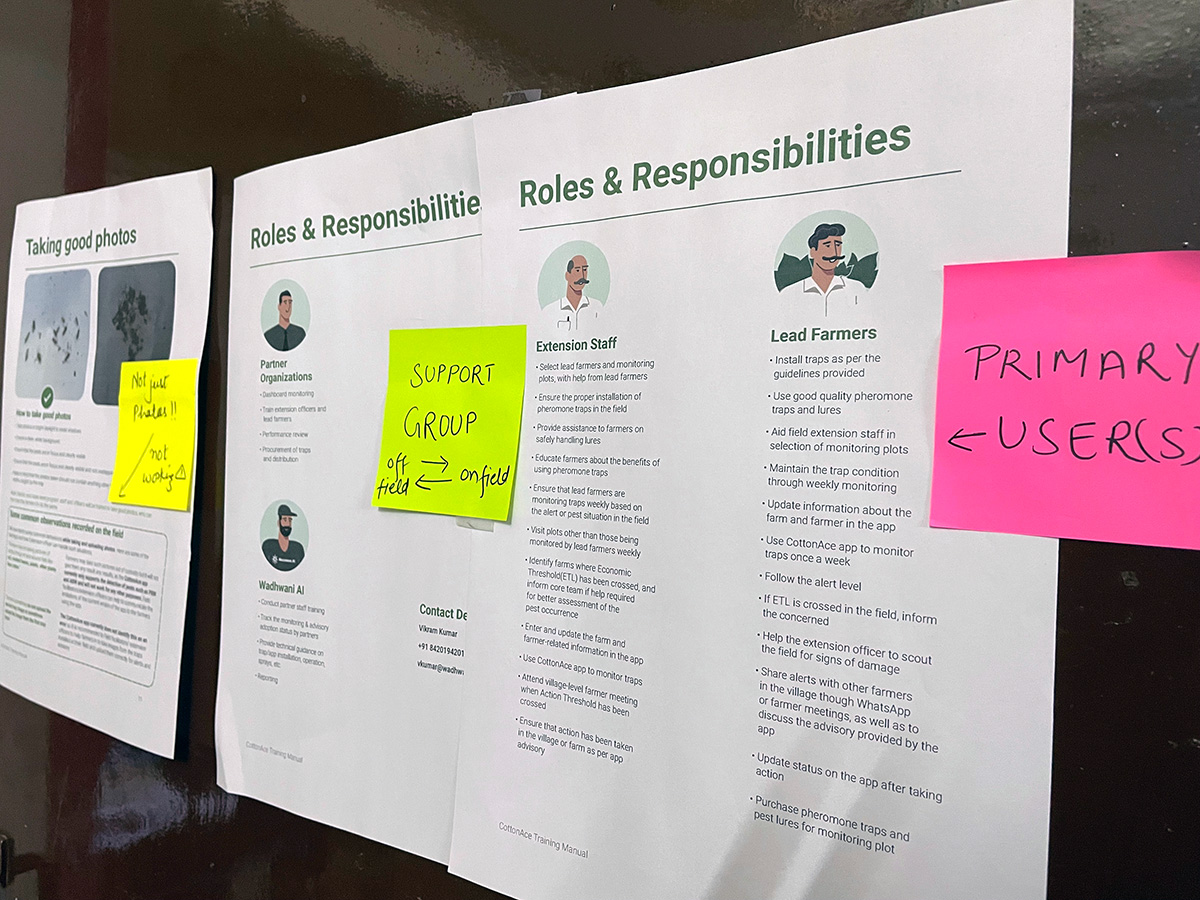

Coming back to the question: how much is too much? In this pilot, we made our model available offline. One of the biggest user pain points was that our app struggled on spotty internet connections. To take you through the process again: farmers set sticky traps across their fields and take pictures of the trapped insects. In the summer, each image was uploaded to the cloud, where it was processed and an alert was generated. We have now taken this offline. The image is no longer uploaded to the cloud; instead, it is processed by the model on the phone, and the alerts are generated instantly. Our research team wrote a very interesting post on this earlier. The roll-out of this app is staged: currently, only the extension workers and a limited set of lead farmers have it. Once the bugs are ironed out, we will roll it out to all our farmer partners.

In the summer, we did extensive research on the challenges farmers faced while using the app. We revamped the UI to cater to those needs, focusing on the details at a granular level. Covid-19 now prevents us from being present on location and observing the farmers, so we had to make informed guesses. We plan to use usage analytics and interviews to check whether their needs have been met.

Another important update in the Kharif pilot has been the launch of the dashboard. The idea behind this dashboard is that program partners can monitor updates in real time. They can analyse which farmers are getting red alerts and which have not uploaded anything in the last week. It also gives the partner a drill-down view of the images that farmers have uploaded and the pest counts. The dashboard can warn partners if there is a widespread infestation at a district level. It is a critical part of our solution: it encourages focussed monitoring and makes us proactive rather than reactive. We believe that the app is just one part of the answer. What we currently have is a high-touch model. From a long-term sustainability perspective, we need to automate some parts of the process. This dashboard will be critical in optimizing the allocation of resources as we scale.
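For readers who think in code, the three dashboard checks described above can be sketched roughly as follows. This is illustrative only and not the code behind the dashboard: the `Upload` record, the function names, and both thresholds (`RED_ALERT_PEST_COUNT`, `DISTRICT_WARNING_SHARE`) are assumptions made up for this example.

```python
from dataclasses import dataclass
from datetime import date, timedelta


@dataclass
class Upload:
    """One trap photo after processing (hypothetical record shape)."""
    farmer_id: str
    district: str
    day: date
    pest_count: int


# Hypothetical thresholds, chosen only for illustration.
RED_ALERT_PEST_COUNT = 8      # pests per photo that triggers a red alert
DISTRICT_WARNING_SHARE = 0.5  # share of farmers on red alert that flags a district


def red_alert_farmers(uploads):
    """Farmers with at least one upload at or above the red-alert count."""
    return {u.farmer_id for u in uploads if u.pest_count >= RED_ALERT_PEST_COUNT}


def inactive_farmers(all_farmers, uploads, today, days=7):
    """Farmers with no upload in the last `days` days."""
    cutoff = today - timedelta(days=days)
    active = {u.farmer_id for u in uploads if u.day > cutoff}
    return set(all_farmers) - active


def districts_with_warning(uploads):
    """Districts where the share of uploading farmers on red alert is high."""
    farmers, red = {}, {}
    for u in uploads:
        farmers.setdefault(u.district, set()).add(u.farmer_id)
        if u.pest_count >= RED_ALERT_PEST_COUNT:
            red.setdefault(u.district, set()).add(u.farmer_id)
    return sorted(
        d for d, ids in farmers.items()
        if len(red.get(d, ())) / len(ids) >= DISTRICT_WARNING_SHARE
    )
```

A partner-facing view would call these on the latest uploads and highlight the resulting farmers and districts. How a district-level warning is actually defined is a program decision; the share-based rule here is just one plausible choice.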

Lessons learned and new challenges in front of us

One complaint from our summer pilot, which we have since corrected, concerned recommendations. Our recommendations essentially advised cotton farmers to use one pesticide from a list of many, which gave organic farmers no option. We course-corrected there. We were also told that not every kind of pesticide is available in every part of the country. That was a valuable lesson, and our recommendations now account for that as well. Another key piece of information we incorporated was the time of sowing, which dictates how much the farmer needs to spray.
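As a rough illustration of the first two corrections, a recommendation step might filter treatments by local availability and by whether the farmer is organic. This is a sketch under assumptions, not our recommendation engine: the `Treatment` record, the `recommend` function, and the placeholder treatment names are hypothetical. The sowing-time adjustment is left out because its rule is not described here.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Treatment:
    """A pest treatment option (hypothetical record shape)."""
    name: str
    organic: bool
    available_in: frozenset  # districts where the product can be bought


def recommend(treatments, district, organic_only):
    """Return names of treatments the farmer can actually obtain and use."""
    return [
        t.name
        for t in treatments
        if district in t.available_in and (t.organic or not organic_only)
    ]


# Placeholder catalogue; the names are illustrative, not real products.
CATALOGUE = [
    Treatment("Treatment A (synthetic)", False, frozenset({"Wardha", "Kutch"})),
    Treatment("Treatment B (organic)", True, frozenset({"Wardha"})),
]
```

With this catalogue, an organic farmer in Wardha would only see Treatment B, while an organic farmer in Kutch would get an empty list, which is itself a useful signal that the catalogue has a gap for that district.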

We have also come across challenges brought on by nature. Unseasonal and erratic rainfall ruined a lot of fields, which meant a small subset of farmers abandoned their crops and stopped taking pictures. While unfortunate, it is an important data point for us.

Taken together, both pilots have taught us a lot about how farmers react to technology and how closely they follow instructions on the app. While our real validation will come soon, this Kharif pilot has given us a lot of knowledge that will help us build for the vulnerable in India. We will chalk this up as another win.